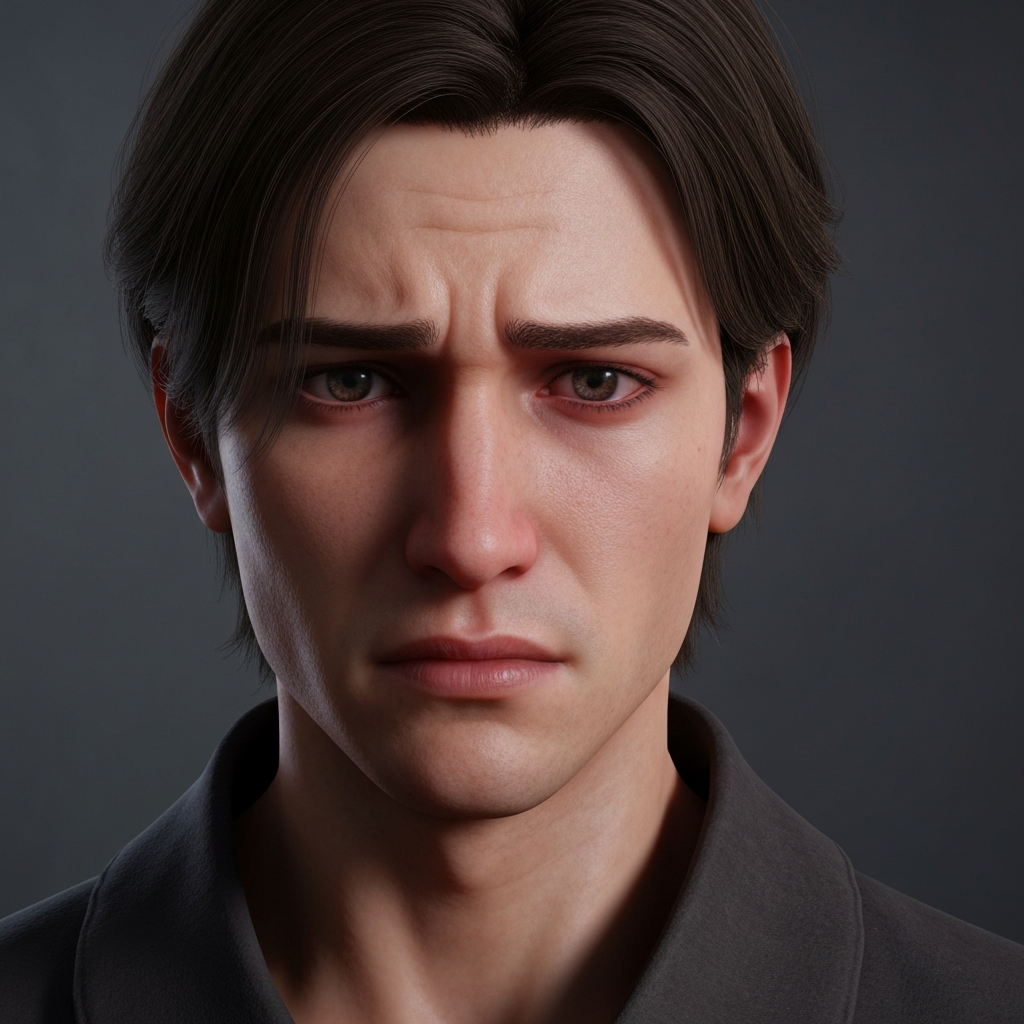

The moment that changed everything for me happened during my second playthrough of The Last of Us. Ellie cracked a joke (something dumb about a pun she’d read), and Joel’s face did this thing. Not a smile exactly, but a softening. The tension around his eyes relaxed for half a second before the weight of their world pulled it back. That microexpression didn’t feel like a canned reaction dropped into that specific moment. It felt like something that emerged from systems working together to simulate genuine emotion.

I paused the game and just sat there, thinking about how far we’d come from the fixed grins of early 3D characters.

What Emotion Simulation Actually Means

When we talk about emotion simulation in game characters, we’re discussing systems that generate appropriate emotional responses based on context, relationships, and events rather than simply playing predetermined animations at scripted moments.

This goes beyond making characters look sad or happy. True emotion simulation involves internal states that influence behavior, expressions that emerge from those states, and responses that feel coherent with established personalities. A character doesn’t just display anger because the script says “be angry here.” They become angry because something happened that their personality framework interprets as anger-worthy.

The distinction matters enormously for player experience. Scripted emotions feel performed. Simulated emotions feel lived.

The Technical Architecture Behind Digital Feelings

Several approaches power modern emotion simulation, often working in combination.

Emotional state machines track discrete feelings like happiness, fear, anger, and sadness. Events trigger transitions between states, and each state influences available behaviors and expressions. This approach works reliably but can feel mechanical: characters ping-pong between emotional categories without nuance.
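To make the state-machine idea concrete, here is a minimal Python sketch. The emotion names, event strings, and behavior sets are hypothetical placeholders, not taken from any shipped game; the point is the shape: a lookup table from (current state, event) to the next state, plus a per-state set of allowed behaviors.

```python
from enum import Enum, auto


class Emotion(Enum):
    NEUTRAL = auto()
    HAPPY = auto()
    AFRAID = auto()
    ANGRY = auto()
    SAD = auto()


# Hypothetical transition table: (current state, event) -> next state.
# Any pair not listed leaves the current state unchanged.
TRANSITIONS = {
    (Emotion.NEUTRAL, "gift"): Emotion.HAPPY,
    (Emotion.NEUTRAL, "threat"): Emotion.AFRAID,
    (Emotion.NEUTRAL, "insult"): Emotion.ANGRY,
    (Emotion.HAPPY, "insult"): Emotion.ANGRY,
    (Emotion.HAPPY, "loss"): Emotion.SAD,
    (Emotion.AFRAID, "threat_passed"): Emotion.NEUTRAL,
    (Emotion.ANGRY, "apology"): Emotion.NEUTRAL,
    (Emotion.SAD, "comfort"): Emotion.NEUTRAL,
}

# Each state gates which behaviors the character may choose from.
BEHAVIORS = {
    Emotion.NEUTRAL: {"chat", "trade", "follow"},
    Emotion.HAPPY: {"chat", "trade", "follow", "joke"},
    Emotion.AFRAID: {"flee", "hide"},
    Emotion.ANGRY: {"shout", "refuse_trade"},
    Emotion.SAD: {"sit_alone", "follow"},
}


class EmotionStateMachine:
    def __init__(self, state: Emotion = Emotion.NEUTRAL):
        self.state = state

    def handle(self, event: str) -> Emotion:
        # Look up the transition; fall back to the current state.
        self.state = TRANSITIONS.get((self.state, event), self.state)
        return self.state

    def available_behaviors(self) -> set:
        return BEHAVIORS[self.state]
```

Notice that a single insult flips this character from happy straight to angry with nothing in between. That abrupt jump is exactly the mechanical ping-pong that makes pure state machines feel stiff.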

Dimensional models treat emotion as positions on continuous scales rather than discrete states. The circumplex model, for instance, plots feelings along arousal (calm to excited) and valence (negative to positive) dimensions. Characters exist somewhere within this emotional space, allowing for complex mixed feelings and gradual transitions.
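A dimensional model can be sketched just as briefly. In this hypothetical Python version, a character's affect is a single (valence, arousal) point with each axis clamped to -1..1; events set a target point, and the character drifts a fraction of the way toward it every tick instead of snapping. The four quadrant labels are a deliberately coarse simplification of the circumplex, chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Affect:
    valence: float  # -1.0 (negative) .. +1.0 (positive)
    arousal: float  # -1.0 (calm) .. +1.0 (excited)


def clamp(x: float, lo: float = -1.0, hi: float = 1.0) -> float:
    return max(lo, min(hi, x))


def nudge(current: Affect, target: Affect, rate: float) -> Affect:
    """Move a fraction `rate` (0..1) of the way toward `target`,
    so emotional transitions are gradual rather than instant."""
    return Affect(
        clamp(current.valence + (target.valence - current.valence) * rate),
        clamp(current.arousal + (target.arousal - current.arousal) * rate),
    )


def describe(a: Affect) -> str:
    """Coarse quadrant labels; a real system might carve the space finer."""
    if math.hypot(a.valence, a.arousal) < 0.2:
        return "neutral"
    if a.valence >= 0:
        return "excited" if a.arousal >= 0 else "content"
    return "distressed" if a.arousal >= 0 else "depressed"
```

Because the state is a continuous point, a character can sit partway between "distressed" and "content" during a transition, which a discrete state machine has no way to represent.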

Appraisal theory implementations give characters the ability to evaluate events against their own goals, values, and beliefs. A character who values family might respond with grief to a loss that barely registers for one focused on personal ambition. This creates personality-consistent emotional responses without requiring developers to script every possible reaction.
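Appraisal can be reduced to a toy calculation: score how an event helps or hinders each goal, then weight that score by how much the character cares about the goal. The sketch below assumes a hypothetical `Event`/`Character` model with only two output emotions, joy and grief; real appraisal systems evaluate events along many more dimensions.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    description: str
    # How the event affects each goal: -1.0 (blocks it) .. +1.0 (advances it).
    goal_impacts: dict[str, float]


@dataclass
class Character:
    name: str
    # How much the character cares about each goal, 0.0 .. 1.0.
    values: dict[str, float] = field(default_factory=dict)


def appraise(character: Character, event: Event) -> dict[str, float]:
    """Weigh each goal impact by the character's investment in that goal.
    Goals the character does not care about contribute nothing, so the
    same event lands differently on different personalities."""
    joy = grief = 0.0
    for goal, impact in event.goal_impacts.items():
        weight = character.values.get(goal, 0.0)
        if impact > 0:
            joy += weight * impact
        else:
            grief += weight * -impact
    return {"joy": round(joy, 3), "grief": round(grief, 3)}


loss = Event("a family member dies", {"family": -1.0})
promotion = Event("offered command of the guard", {"career": 0.8})
devoted = Character("Mara", {"family": 0.9, "career": 0.2})
climber = Character("Vic", {"family": 0.2, "career": 0.9})
```

Running `appraise(devoted, loss)` yields heavy grief while `appraise(climber, loss)` barely registers, and the promotion reverses the pattern. Nobody scripted those four reactions separately; the differences fall out of each character's values.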

The Sims series pioneered accessible emotion simulation for mainstream gaming, and The Sims 4 made it explicit. Its emotion system tracks multiple moodlets simultaneously, each feeding a particular emotion, and the strongest emotion becomes dominant and shapes which interactions are available. A Sim feeling playful and slightly embarrassed behaves differently than one feeling playful and confident. Simple? Yes. But it demonstrated that emotional complexity creates compelling gameplay.
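The dominant-emotion idea is easy to approximate. This is not Maxis's actual code; it is a hypothetical Python sketch in which each moodlet feeds weight into one emotion, the weights are summed per emotion, and the strongest total wins if it clears a threshold. The `threshold` default and the fallback mood name "fine" are assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Moodlet:
    source: str   # what caused it, e.g. "told a great joke"
    emotion: str  # which emotion it feeds
    weight: int   # how strongly it pushes toward that emotion


def dominant_emotion(moodlets: list[Moodlet],
                     threshold: int = 2) -> tuple[str, dict[str, int]]:
    """Sum moodlet weights per emotion and return (winner, all totals)."""
    totals: dict[str, int] = defaultdict(int)
    for m in moodlets:
        totals[m.emotion] += m.weight
    if not totals:
        return "fine", {}
    emotion, score = max(totals.items(), key=lambda kv: kv[1])
    # Weak signals leave the character in a baseline mood.
    return (emotion if score >= threshold else "fine"), dict(totals)
```

Returning the full totals alongside the winner lets other systems react to secondary feelings too, which is how a playful-but-embarrassed character could be told apart from a playful-and-confident one.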

Facial Animation: Where Emotion Becomes Visible

Internal emotional states mean nothing if players can’t perceive them. This is where facial animation technology becomes crucial.

Performance capture has advanced dramatically over the past decade. L.A. Noire made facial accuracy a core gameplay mechanic: reading suspects’ emotions became central to interrogation sequences. The technology sometimes created uncanny valley effects, but it proved that nuanced expressions could carry narrative weight.

Red Dead Redemption 2 took a different approach, blending captured performances with procedural systems that added contextual emotional flourishes. Characters react to cold with visible discomfort. They show wariness around people who’ve wronged them. These small touches accumulate into something remarkably convincing.

Procedural facial systems generate expressions algorithmically based on emotional states rather than replaying captured footage. This allows for nearly endless variation and context-sensitivity but requires sophisticated rigging and careful tuning to avoid looking robotic.
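A procedural face rig is, at its core, a function from emotional state to animation weights. The Python sketch below maps a (valence, arousal) point, each axis in -1..1, to a handful of blendshape weights between 0 and 1, then adds a small seeded random jitter so repeated expressions are not identical. The blendshape names, coefficients, and jitter size are invented for illustration; a production rig drives many more controls.

```python
import random


def clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


def expression_weights(valence: float, arousal: float,
                       rng: random.Random,
                       jitter: float = 0.05) -> dict[str, float]:
    """Map an affect point to hypothetical blendshape weights in 0..1."""
    pos = max(valence, 0.0)   # positive feeling
    neg = max(-valence, 0.0)  # negative feeling
    hi = max(arousal, 0.0)    # excitement / alarm
    base = {
        "mouth_smile": pos * (0.6 + 0.4 * hi),
        "mouth_frown": neg * (1.0 - 0.5 * hi),
        "brow_furrow": neg * hi,
        "brow_raise": hi * 0.7,
        "eye_widen": hi * (0.3 + 0.7 * neg),
        "eyelid_droop": max(-arousal, 0.0) * 0.6,
    }
    # Per-channel jitter keeps the face from looking frozen.
    return {name: round(clamp01(w + rng.uniform(-jitter, jitter)), 3)
            for name, w in base.items()}
```

The `jitter` parameter is where the careful tuning comes in: too little and the face looks frozen, too much and it twitches.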

The best current implementations combine approaches, using performance capture for key story moments while procedural systems handle ambient emotional expression during gameplay.

Games That Get Emotion Right

Detroit: Become Human built its entire narrative around emotional authenticity. The android characters grapple with emerging feelings, and players must read emotional cues to navigate relationships successfully. The technology served thematic purposes perfectly: watching machines learn to feel while controlling their choices created genuinely affecting moments.

Red Dead Redemption 2 remains the high-water mark for ambient emotional expression. Arthur Morgan doesn’t just exist in cutscenes; he lives through gameplay. His comments reflect his mood. His posture changes based on recent events. The game carries emotional continuity across the boundary between cutscene and play, the very seam that usually breaks immersion.

The Last of Us Part II pushed facial fidelity to remarkable heights, but more importantly, it gave characters emotional arcs that felt psychologically coherent. The controversial narrative worked precisely because character emotions followed believable internal logic, even when players disagreed with resulting choices.

Even smaller games demonstrate sophisticated emotional thinking. Spiritfarer made players genuinely grieve for departing characters because their personalities and emotional responses felt consistent and earned over extended relationship-building.

Why This Matters for Players

Emotion simulation directly influences player empathy. We evolved to read faces and respond to emotional cues. When game characters display convincing emotional responses, our social cognition activates whether we consciously recognize it or not.

Player-psychology research suggests that emotional authenticity predicts investment more reliably than graphical fidelity. Players remember how characters made them feel long after forgetting technical specifications.

Moral decision-making becomes genuinely difficult when characters respond emotionally to choices. Betraying someone who displays hurt rather than just stating displeasure feels meaningfully worse. Games gain ethical weight through emotional consequence.

Relationship systems become more compelling when characters remember emotional context. A companion who still shows subtle wariness after you endangered them creates richer narrative texture than one who forgives and forgets instantly.
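Remembered emotional context can be modeled as memories whose intensity fades over time instead of resetting. In this hypothetical Python sketch, each hurtful event stores a wariness value that decays exponentially with an assumed 24-game-hour half-life, and the companion's greeting is chosen from the current total. The thresholds and dialogue lines are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class EmotionalMemory:
    event: str
    wariness: float  # initial intensity, 0..1
    time: float      # game hours when it happened


@dataclass
class Companion:
    name: str
    half_life_hours: float = 24.0  # how fast grudges fade (an assumption)
    memories: list[EmotionalMemory] = field(default_factory=list)

    def remember(self, event: str, wariness: float, now: float) -> None:
        self.memories.append(EmotionalMemory(event, wariness, now))

    def wariness(self, now: float) -> float:
        """Sum of remembered wariness, each memory decaying exponentially
        with the configured half-life; the total is capped at 1.0."""
        total = 0.0
        for m in self.memories:
            elapsed = max(now - m.time, 0.0)
            total += m.wariness * 0.5 ** (elapsed / self.half_life_hours)
        return min(total, 1.0)

    def greeting(self, now: float) -> str:
        w = self.wariness(now)
        if w > 0.5:
            return "Keep your distance."
        if w > 0.1:
            return "...Fine. Let's go."
        return "Good to see you!"
```

Two days after being endangered, this companion is still guarded; by day four, the wariness has faded enough for a warm greeting.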

The Honest Limitations

We’re nowhere near solving emotion simulation completely. Current systems still produce inconsistencies: characters who seem inexplicably calm during crises or overreact to minor events. The gap between cutscene emotional fidelity and gameplay expression remains noticeable in most titles.

Cultural differences complicate universal emotional reading. Expressions that communicate clearly to Western audiences might confuse players from different cultural backgrounds. Building culturally aware emotion systems presents significant design challenges.

Processing costs limit simulation complexity in open-world games. Simulating deep emotional states for hundreds of NPCs simultaneously exceeds the processing budget most developers can spare.

Voice acting must align with facial systems, requiring either extensive recording sessions covering emotional variations or synthetic voice technology that still sounds artificial in most implementations.

Where Emotion Simulation Heads Next

Integration with player-responsive narrative systems will likely define the next generation: characters who emotionally adapt to player choices throughout entire games rather than just at scripted decision points.

Physiological feedback loops interest some developers: using heart-rate monitors or controller grip pressure to influence how characters respond to players. Your visible nervousness might make NPCs suspicious. Your calm confidence might earn respect.
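A physiological loop needs two pieces: turning raw sensor readings into a nervousness estimate, and mapping that estimate onto NPC attitudes. The Python sketch below is purely illustrative; the 40 bpm span, the 70/30 weighting between heart rate and grip pressure, and the reaction thresholds are all assumptions, and no specific controller or sensor API is implied.

```python
def nervousness(heart_rate_bpm: float, resting_bpm: float,
                grip_pressure: float = 0.0) -> float:
    """Estimate player nervousness in 0..1 from heart rate above the
    player's own resting baseline plus normalized grip pressure (0..1)."""
    # 40 bpm above resting counts as maximally elevated (an assumption).
    hr_component = min(max((heart_rate_bpm - resting_bpm) / 40.0, 0.0), 1.0)
    grip_component = min(max(grip_pressure, 0.0), 1.0)
    return 0.7 * hr_component + 0.3 * grip_component


def npc_reaction(nervousness_level: float) -> str:
    """Map nervousness onto a hypothetical NPC attitude."""
    if nervousness_level >= 0.6:
        return "suspicious"
    if nervousness_level <= 0.2:
        return "respectful"
    return "neutral"
```

Measuring against the player's own resting heart rate, rather than a fixed number, matters because baselines vary widely between people.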

The technology continues advancing, but the goal remains fundamentally human: creating characters who feel like someone rather than something. When we succeed, games become capable of emotional experiences that rival traditional narrative forms.

Frequently Asked Questions

What is emotion simulation in video games?

Systems that generate character emotional responses based on context and personality rather than simply playing scripted animations at predetermined moments.

Which games have the best emotion simulation?

Red Dead Redemption 2, The Last of Us Part II, Detroit: Become Human, and The Sims 4 represent different successful approaches to character emotional modeling.

How do games make characters’ faces show emotion?

Through combinations of performance capture, procedural animation systems, and sophisticated facial rigging that translates internal emotional states into visible expressions.

Does emotion simulation affect how players make choices?

Significantly. Research suggests players make different moral decisions when characters respond with visible emotional consequences rather than simple acknowledgment.

Will future games have even more realistic character emotions?

Almost certainly. Advancing technology enables more nuanced expressions, while deeper simulation systems allow for more contextually appropriate emotional responses.