Anyone who’s spent late nights hunting down an elusive memory leak or chasing a physics glitch that only appears on specific hardware knows the pain of game debugging. It’s tedious, time-consuming, and frankly exhausting. After fifteen years in game development, I’ve watched debugging evolve from printf statements and breakpoints to something far more sophisticated. The latest shift? Intelligent debugging tools powered by machine learning that are genuinely changing how we squash bugs.

The Debugging Problem in Modern Game Development

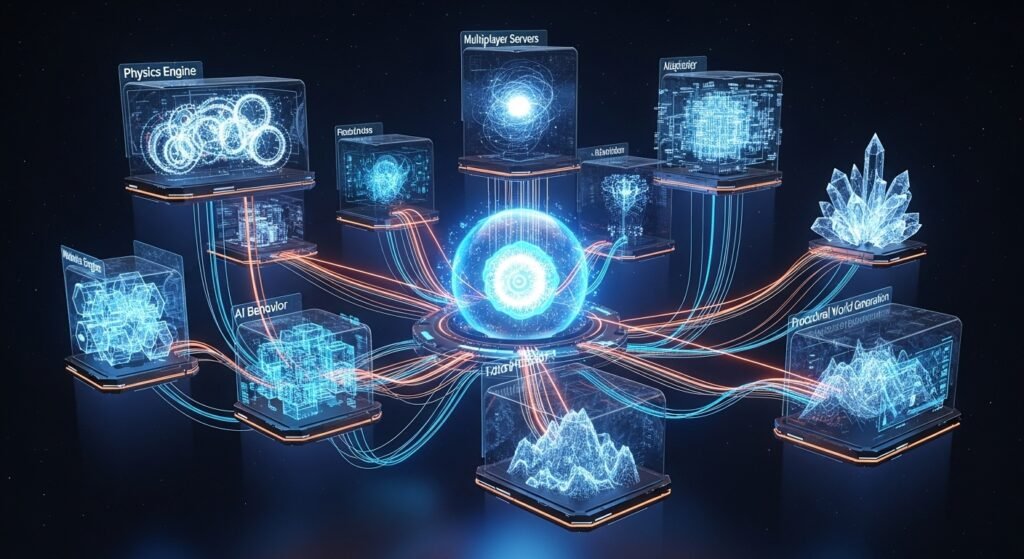

Games have become absurdly complex. We’re talking millions of lines of code, interconnected systems, real-time physics, networked multiplayer, and procedural content generation all running simultaneously. Traditional debugging methods struggle to keep pace.

I remember working on a mid-sized RPG project where a single bug, a character occasionally falling through the terrain, took our team three weeks to isolate. The issue only manifested under specific conditions involving a particular animation state combined with certain terrain geometry. Manual testing simply couldn’t reproduce it reliably.

This is exactly where intelligent debugging solutions shine.

What AI Debugging Tools Actually Do

Let me cut through the marketing hype. These tools essentially use pattern recognition and predictive analysis to identify potential issues before they become critical problems. They analyze code behavior, detect anomalies in runtime data, and correlate crashes with specific code paths far faster than human developers can.

The best tools in this space aren’t replacing programmers. They’re augmenting our capabilities by handling the grunt work of data analysis while we focus on actually fixing problems.
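To make "detect anomalies in runtime data" a little more concrete, here is a minimal sketch of the idea using a rolling z-score over frame times. It is a plain statistical check rather than a trained model, and it isn't how any particular commercial tool works. The function name, the window size, the threshold, and the `min_sigma` floor are all my own illustrative assumptions.

```python
from statistics import mean, stdev

def find_frame_spikes(frame_times_ms, window=30, threshold=3.0, min_sigma=0.1):
    """Return (index, value) pairs for frames far above the recent average."""
    spikes = []
    for i in range(window, len(frame_times_ms)):
        recent = frame_times_ms[i - window:i]
        mu = mean(recent)
        # Floor the deviation so a very steady window does not flag tiny jitter.
        sigma = max(stdev(recent), min_sigma)
        z = (frame_times_ms[i] - mu) / sigma
        if z > threshold:
            spikes.append((i, frame_times_ms[i]))
    return spikes

# Synthetic data: steady frames around 16.7 ms with one hitch at frame 50.
samples = [16.6 + 0.1 * (i % 3) for i in range(100)]
samples[50] = 48.0
print(find_frame_spikes(samples))  # prints [(50, 48.0)]
```

Real tools look at far more signals than frame time, but the principle is the same: establish what "normal" looks like from recent data, then flag what deviates from it.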

Automated Log Analysis

Modern debugging platforms ingest massive quantities of log data and automatically flag unusual patterns. Rather than manually scanning through thousands of log entries, developers receive prioritized lists of potential issues ranked by severity and frequency.

Tools like Backtrace and Sentry have incorporated machine learning models that learn from historical crash data. They group similar crashes together, identify root causes, and even suggest likely fixes based on how similar issues were resolved previously.
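The grouping step is easier to picture with a small example. The sketch below is a deliberately simplified heuristic, not the algorithm Backtrace or Sentry actually use: it strips volatile details (memory addresses and line numbers) from the top few stack frames, hashes what remains, and ranks the resulting groups by frequency. The trace format, the function names, and the sample reports are all made up for illustration.

```python
import hashlib
import re
from collections import Counter

def fingerprint(stack_trace, top_frames=3):
    """Hash the top frames of a trace after stripping volatile details."""
    frames = []
    for line in stack_trace.strip().splitlines()[:top_frames]:
        line = re.sub(r"0x[0-9a-fA-F]+", "", line)  # drop memory addresses
        line = re.sub(r":\d+", "", line)            # drop line numbers
        frames.append(line.strip())
    return hashlib.sha1("|".join(frames).encode("utf-8")).hexdigest()[:12]

def rank_crash_groups(reports):
    """Group reports by fingerprint and return (group_id, count), most frequent first."""
    counts = Counter(fingerprint(r) for r in reports)
    return counts.most_common()

# Hypothetical reports: the first two differ only in address and line number.
reports = [
    "Terrain::Sample 0x7ffa12 terrain.cpp:210\nCharacter::Move character.cpp:88",
    "Terrain::Sample 0x7ffb99 terrain.cpp:214\nCharacter::Move character.cpp:88",
    "Audio::Mix 0x10aa00 mixer.cpp:45\nAudio::Update audio.cpp:12",
]
for group_id, count in rank_crash_groups(reports):
    print(group_id, count)
```

The two terrain crashes collapse into one group with a count of 2, which is what lets a prioritized list put that issue at the top.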

Visual Debugging and Behavior Analysis

For gameplay bugs, visual debugging tools have become invaluable. Tooling built around Unity’s ML-Agents toolkit, or custom tools built on TensorFlow, allows teams to visualize NPC behavior, identify pathfinding failures, and detect when game systems deviate from intended parameters.

I worked with a studio last year that implemented visual debugging for their stealth game’s enemy AI. The system highlighted moments when guard patrol patterns created unintended gaps or overlaps, issues that would’ve taken months to surface through conventional playtesting.
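I can’t share that studio’s system, but the core idea of finding patrol gaps can be sketched in a few lines. The example below assumes a simple grid level, guards that see every cell within a square radius of their position, and patrol routes given as lists of grid cells. All of those are simplifying assumptions of mine, not details from the actual project.

```python
def coverage_counts(width, height, patrols, vision_radius=1):
    """Count, per grid cell, how many guard positions had that cell in view."""
    counts = [[0] * width for _ in range(height)]
    for route in patrols:
        for gx, gy in route:
            y_lo, y_hi = max(0, gy - vision_radius), min(height, gy + vision_radius + 1)
            x_lo, x_hi = max(0, gx - vision_radius), min(width, gx + vision_radius + 1)
            for y in range(y_lo, y_hi):
                for x in range(x_lo, x_hi):
                    counts[y][x] += 1
    return counts

def find_gaps(counts):
    """Return (x, y) cells that no guard ever sees during the patrol cycle."""
    return [(x, y) for y, row in enumerate(counts) for x, c in enumerate(row) if c == 0]

# Hypothetical 6x3 corridor with two guards and a blind column between them.
patrols = [
    [(0, 1), (1, 1)],  # guard A paces at the left end
    [(5, 1)],          # guard B stands at the right end
]
counts = coverage_counts(6, 3, patrols)
print(find_gaps(counts))  # prints [(3, 0), (3, 1), (3, 2)]
```

A visual tool would paint these cells onto the level as a heatmap. The same counts can also hint at heavily covered areas, although separating true overlaps between different guards would need per-guard bookkeeping that this sketch leaves out.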

Predictive Crash Prevention

This is where things get genuinely exciting. Some platforms now aim to predict crashes before they happen by analyzing code patterns and comparing them against databases of known problematic structures. AI-assisted editor features such as Microsoft’s IntelliCode, together with static analyzers, surface risky code during development, helping catch null pointer risks and memory issues before they reach production.

Real World Implementation: What Actually Works

Let’s talk practical application. After experimenting with several solutions across different projects, here’s what I’ve found effective:

Crash Analytics Platforms: Backtrace, Sentry, and GameAnalytics have become essential for live games. They automatically symbolicate crash dumps, group related issues, and provide actionable insights. One mobile game I consulted for reduced their crash rate by 40% within two months simply by implementing proper crash analytics and acting on the prioritized issues.

Static Analysis Tools: PVS-Studio and Coverity incorporate intelligent pattern matching to catch bugs during code review. They’re not perfect (false positives remain an issue), but they catch genuine problems that would otherwise slip through.

Automated Testing Frameworks: Tools like Functionize and Testim use intelligent automation to create and maintain test suites. They adapt to UI changes automatically, reducing the maintenance burden that traditionally made automated testing impractical for games.

Limitations Worth Understanding

I’d be doing you a disservice if I painted these tools as silver bullets. They’re not.

Machine learning models require training data, which means they perform poorly on novel bug types they haven’t encountered before. They also generate false positives that waste developer time. One team I know disabled their intelligent code analysis because the noise-to-signal ratio became unbearable.

Additionally, these tools work best for reproducible, systematic bugs. The weird edge cases, like the physics glitch that only happens when the player jumps while opening inventory during a cutscene on hardware with specific GPU configurations, still require human intuition and persistence.

There’s also the cost factor. Enterprise-grade debugging platforms aren’t cheap, and smaller indie studios often can’t justify the expense. Open source alternatives exist but typically require more technical expertise to implement effectively.

Ethical Considerations and Data Privacy

Something rarely discussed: many debugging tools collect significant user data. Crash reports, gameplay telemetry, and hardware information all flow to external servers. Studios need to consider GDPR compliance, user consent, and data security when implementing these systems.

I’ve seen projects struggle with this balance: wanting comprehensive debugging data while respecting player privacy. The solution usually involves anonymizing data effectively and being transparent about collection practices.
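As a rough illustration of what scrubbing a crash report can look like before it leaves the player’s machine, here is a minimal sketch. It keeps only an allow-list of fields and replaces the player ID with a keyed hash so crashes from the same player can still be correlated. The field names, the `anonymize_report` function, and the key handling are all hypothetical. Note also that a keyed hash is pseudonymization rather than true anonymization, so whether this satisfies a given regulation is a question for your legal team, not for this snippet.

```python
import hashlib
import hmac

# Fields considered safe to send; everything else is dropped by default.
ALLOWED_FIELDS = {"stack_trace", "engine_version", "gpu_model", "os"}

def anonymize_report(report, secret_key):
    """Keep allow-listed fields and swap the player ID for a keyed hash."""
    cleaned = {k: v for k, v in report.items() if k in ALLOWED_FIELDS}
    player_id = report.get("player_id")
    if player_id is not None:
        digest = hmac.new(secret_key, str(player_id).encode("utf-8"), hashlib.sha256)
        cleaned["player_ref"] = digest.hexdigest()[:16]
    return cleaned

# Hypothetical raw report containing personal data.
raw = {
    "player_id": 48213,
    "email": "player@example.com",
    "ip_address": "203.0.113.7",
    "stack_trace": "Terrain::Sample terrain.cpp",
    "gpu_model": "ExampleGPU 9000",
    "os": "Windows 11",
}
print(sorted(anonymize_report(raw, b"replace-with-a-real-secret")))
```

The allow-list approach is a deliberate choice: new fields added to the report later are excluded until someone decides they are safe to collect.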

Looking Forward

The debugging landscape continues evolving rapidly. We’re seeing early implementations of tools that suggest code fixes automatically, not just identify problems. GitHub Copilot and similar assistants already help with debugging by explaining error messages and suggesting solutions.

The integration of debugging tools with development environments is becoming seamless. Real-time analysis during coding, instant feedback on potential issues, and automated regression testing are becoming standard expectations rather than premium features.

Making the Right Choice for Your Team

Selecting debugging tools depends heavily on your project’s specific needs. A live-service multiplayer game has different requirements than a single-player indie title. Consider your team’s technical capabilities, budget constraints, and the types of bugs you most commonly encounter.

Start small. Implement crash analytics first, since it provides immediate value with minimal setup. Then gradually adopt more sophisticated solutions as your needs evolve and your team becomes comfortable with the workflow changes.
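If you want a feel for what "crash analytics first" means at its most basic, the sketch below captures an unhandled exception and writes a structured report to disk using only the Python standard library. A hosted platform’s SDK does much more (upload, symbolication, grouping), and this is not any vendor’s API. The function names, the report fields, and the output path are assumptions for illustration.

```python
import json
import platform
import sys
import traceback
from datetime import datetime, timezone

def build_crash_report(exc_type, exc_value, exc_tb):
    """Collect the basics a crash dashboard would need into a plain dict."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "exception": exc_type.__name__,
        "message": str(exc_value),
        "stack_trace": traceback.format_exception(exc_type, exc_value, exc_tb),
        "os": platform.system(),
        "python": platform.python_version(),
    }

def install_crash_hook(path="crash_report.json"):
    """Write a report for any unhandled exception, then defer to the default handler."""
    def hook(exc_type, exc_value, exc_tb):
        with open(path, "w", encoding="utf-8") as fh:
            json.dump(build_crash_report(exc_type, exc_value, exc_tb), fh, indent=2)
        sys.__excepthook__(exc_type, exc_value, exc_tb)
    sys.excepthook = hook

# Demonstration: build a report from a caught exception without crashing.
try:
    raise ValueError("bad terrain height")
except ValueError:
    report = build_crash_report(*sys.exc_info())
print(report["exception"], "-", report["message"])
```

Calling `install_crash_hook()` once at startup is enough to start collecting reports. From there, the step up to a hosted service is mostly about getting those reports off the player’s machine and grouped sensibly.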

Frequently Asked Questions

Are AI debugging tools worth the investment for indie developers?

Free tiers of platforms like Sentry provide substantial value for smaller projects. You don’t need enterprise solutions to benefit from intelligent crash analysis.

Can these tools replace QA testers?

Absolutely not. They complement human testers by automating repetitive analysis tasks, but creative testing and player experience evaluation require human judgment.

How steep is the learning curve?

Most modern platforms prioritize usability. Basic implementation takes hours, not weeks. Advanced customization requires more expertise.

Do these tools work with all game engines?

Major platforms support Unity, Unreal, and custom engines. Check specific compatibility before committing.

What data do debugging tools typically collect?

Crash dumps, stack traces, device information, and sometimes gameplay context. Review privacy policies carefully.

How accurate are automated bug detection systems?

Accuracy varies significantly. As a rough guide, expect around 70-85% of the results from mature platforms to be useful, with some false positives requiring manual filtering.